Partial correlation: For example, $r_{12.34}$ is the correlation of variables 1 and 2, controlling for variables 3 and 4. If the partial correlation $r_{12.34}$ is equal to the uncontrolled correlation $r_{12}$, the control variables have no effect. If the partial correlation is near 0, the original correlation is spurious.
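As an illustration of the "near 0 means spurious" case, here is a minimal sketch that computes a partial correlation by correlating the residuals left after regressing each variable on the controls. The data are synthetic, and the helper name `partial_corr`, the seed, and the sample size are assumptions made for this example only.

```python
import numpy as np

def partial_corr(x1, x2, controls):
    """Correlation of x1 and x2 after removing the linear effect of the controls."""
    # Design matrix: a column of ones (intercept) plus the control variables.
    Z = np.column_stack([np.ones(len(x1)), controls])
    # Residuals of each variable after regressing it on the controls.
    res1 = x1 - Z @ np.linalg.lstsq(Z, x1, rcond=None)[0]
    res2 = x2 - Z @ np.linalg.lstsq(Z, x2, rcond=None)[0]
    return np.corrcoef(res1, res2)[0, 1]

# Synthetic data (assumption): variables 1 and 2 are related only
# through their shared dependence on variables 3 and 4.
rng = np.random.default_rng(0)
n = 2000
x3 = rng.normal(size=n)
x4 = rng.normal(size=n)
x1 = x3 + x4 + rng.normal(size=n)
x2 = x3 + x4 + rng.normal(size=n)

r12 = np.corrcoef(x1, x2)[0, 1]                           # uncontrolled correlation
r12_34 = partial_corr(x1, x2, np.column_stack([x3, x4]))  # controlling for 3 and 4

print(f"r12 = {r12:.3f}, r12.34 = {r12_34:.3f}")
```

In this setup the uncontrolled correlation is substantial while the partial correlation falls close to 0, which is the pattern described above as a spurious original correlation.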

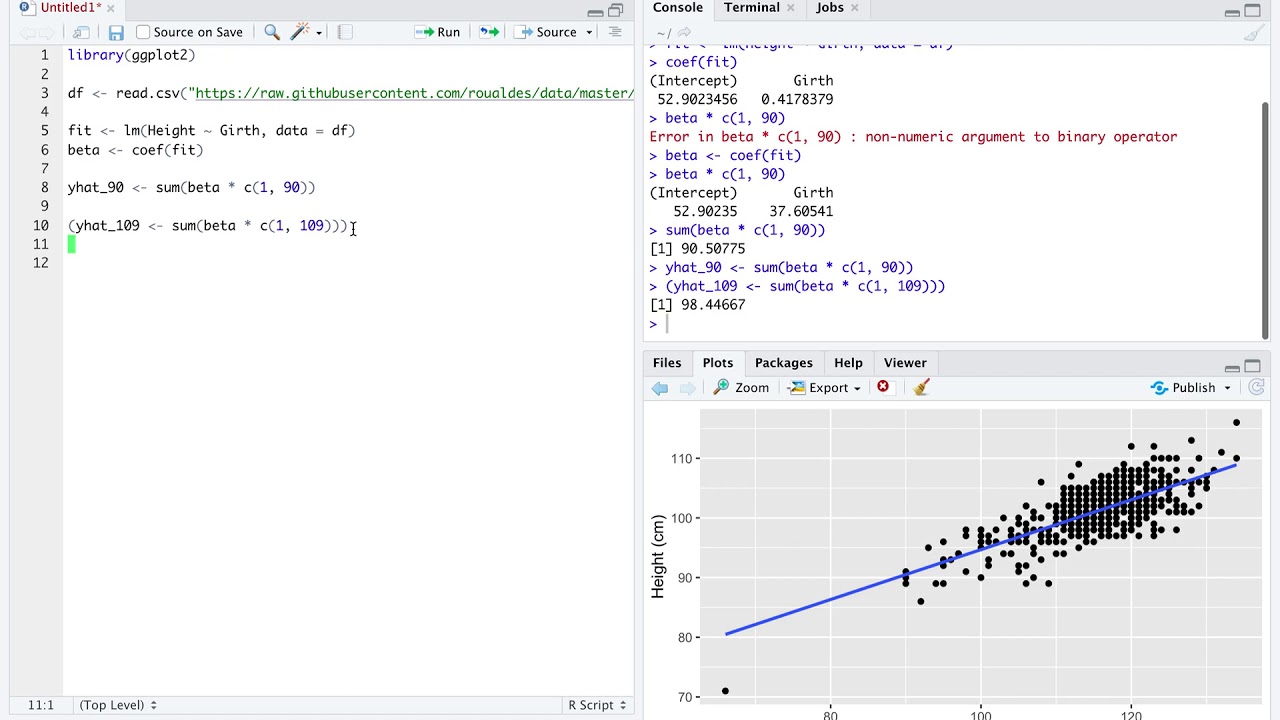

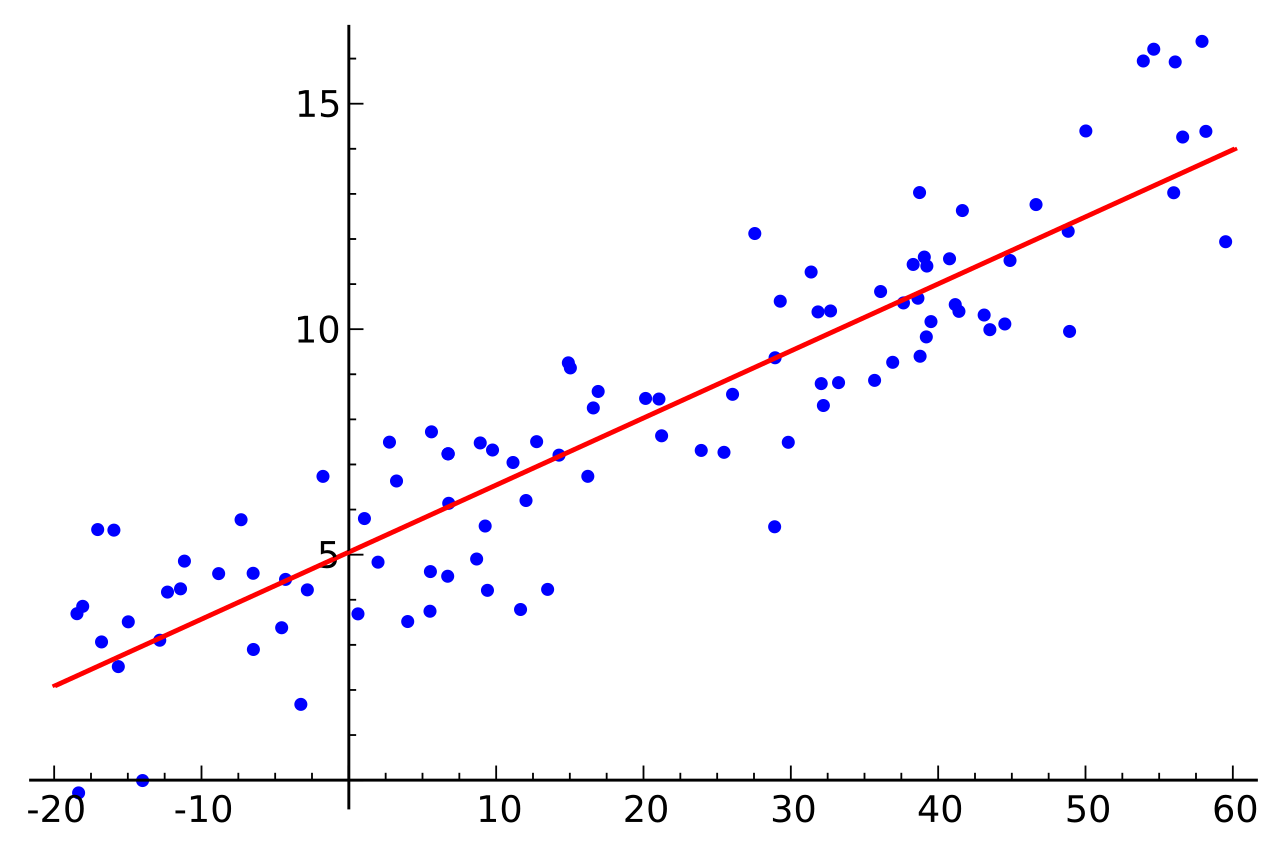

Standardized means that for each datum the mean is subtracted and the result is divided by the standard deviation. The result is that all variables have a mean of 0 and a standard deviation of 1. A beta weight is the average amount by which the dependent variable increases when the independent variable increases by one standard deviation and the other independent variables are held constant. The ratio of the beta weights is the ratio of the predictive importance of the independent variables.

Residuals are the differences between the observed values and those predicted by the regression equation.

Dummy variables: Regression assumes interval data, but dichotomies may be considered a special case of intervalness. Nominal and ordinal categories can be transformed into sets of dichotomies, called dummy variables. To prevent perfect multicollinearity, one category must be left out.

Interpretation of b for dummy variables: For dummy variables that have been binary coded (the usual 1 = present, 0 = not present), b is relative to the reference category (the category left out).

In the linear regression equation for the prediction of UGPA by the residuals, the slope (0.541) is the same value given previously for $b_1$ in the multiple regression equation.

Multiple R: The correlation coefficient between the observed and predicted values. A small value indicates that there is little or no linear relationship between the dependent variable and the independent variables.

Multiple $R^2$: The percent of the variance in the dependent variable explained by the independent variables. It is also called the coefficient of multiple determination.

SSE: The error sum of squares, $\mathrm{SSE} = \sum_i (Y_i - \hat{Y}_i)^2$, where $Y_i$ is the actual value of Y for the $i$th case and $\hat{Y}_i$ (Est $Y_i$) is the regression prediction for the $i$th case.
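The definitions of SSE, multiple R, and multiple R² given above can be checked numerically. The sketch below fits a regression to synthetic data and computes all three. The total sum of squares `sst` and the identity R² = 1 − SSE/SST are standard but are not stated in the text, so treat them as additions made for this example. The data and variable names are likewise assumptions.

```python
import numpy as np

# Synthetic data (assumption): two predictors and a noisy linear response.
rng = np.random.default_rng(1)
n = 200
X = rng.normal(size=(n, 2))
y = 1.0 + 2.0 * X[:, 0] - 0.5 * X[:, 1] + rng.normal(size=n)

# Least-squares fit with an intercept column.
A = np.column_stack([np.ones(n), X])
b = np.linalg.lstsq(A, y, rcond=None)[0]
y_hat = A @ b                                # regression predictions (Est Y)

sse = np.sum((y - y_hat) ** 2)               # error sum of squares
sst = np.sum((y - y.mean()) ** 2)            # total sum of squares about the mean
r_squared = 1.0 - sse / sst                  # share of variance explained
multiple_r = np.corrcoef(y, y_hat)[0, 1]     # correlation of observed and predicted

print(f"SSE = {sse:.2f}, R^2 = {r_squared:.3f}, multiple R = {multiple_r:.3f}")
```

For a least-squares fit that includes an intercept, squaring multiple R reproduces R², which gives a quick consistency check on both quantities.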
The intercept is equal to the estimated Y value when all the independents have a value of 0.

Regression coefficient: Regression coefficients $b_i$ are the slopes of the regression plane in the direction of $x_i$. Each regression coefficient represents the net effect the $i$th variable has on the dependent variable, holding the remaining x's in the equation constant. Beta weights are the regression coefficients for standardized data.
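To make the link between regression coefficients and beta weights concrete, the following sketch fits the same synthetic data twice: once on the raw variables and once after standardizing every variable. It then checks the standard conversion beta_i = b_i · s(x_i) / s(Y), which is not stated in the text and is included here as a known identity. The helper `zscore`, the data, and the scales chosen are assumptions for illustration.

```python
import numpy as np

def zscore(a):
    """Subtract the mean and divide by the sample standard deviation."""
    return (a - a.mean(axis=0)) / a.std(axis=0, ddof=1)

# Synthetic data (assumption): the two predictors have very different scales.
rng = np.random.default_rng(2)
n = 300
X = rng.normal(size=(n, 2)) * np.array([1.0, 5.0])
y = 3.0 + 2.0 * X[:, 0] + 0.4 * X[:, 1] + rng.normal(size=n)

# Unstandardized coefficients: b[0] is the intercept, b[1:] are the slopes.
A = np.column_stack([np.ones(n), X])
b = np.linalg.lstsq(A, y, rcond=None)[0]

# Beta weights: the same regression on standardized data.
Az = np.column_stack([np.ones(n), zscore(X)])
beta = np.linalg.lstsq(Az, zscore(y), rcond=None)[0]

# Equivalent route: rescale each slope by sd(x_i) / sd(y).
beta_from_b = b[1:] * X.std(axis=0, ddof=1) / y.std(ddof=1)

print("b slopes:     ", b[1:])
print("beta weights: ", beta[1:])
```

The raw slopes depend on the units of each predictor, while the beta weights are unit-free. The intercept of the standardized fit is 0 up to rounding because every variable has mean 0.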

The regression equation takes the form $Y = b_0 + b_1 x_1 + b_2 x_2 + \dots + b_n x_n + e$, where Y is the dependent variable, the b's are the regression coefficients for the corresponding x (independent) terms, $b_0$ is a constant or intercept, and e is the error term reflected in the residuals.

Intercept: The intercept, $b_0$, is where the regression plane intersects the Y-axis.

Ordinary least squares: The parameters of the regression equation are estimated using the ordinary least squares (OLS) method. This method derives its name from the criterion used to draw the best-fit regression line: a line such that the sum of the squared deviations of all the points from the line is minimized.
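The least-squares criterion described above can be sketched in a few lines. This example solves the normal equations (one common way to obtain the OLS estimates, chosen here for brevity and not necessarily how any particular package does it) on synthetic data, then shows that moving a coefficient away from the estimate increases the sum of squared deviations. All names and data are assumptions for illustration.

```python
import numpy as np

# Synthetic data (assumption): Y depends linearly on two predictors plus noise.
rng = np.random.default_rng(3)
n = 100
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
y = 0.5 + 1.5 * x1 - 2.0 * x2 + rng.normal(scale=0.3, size=n)

# Design matrix with a leading column of ones for the intercept b0.
A = np.column_stack([np.ones(n), x1, x2])

# OLS estimates from the normal equations (A'A) b = A'y.
b = np.linalg.solve(A.T @ A, A.T @ y)

def sse(coefs):
    """Sum of squared deviations between observed Y and the fitted plane."""
    return np.sum((y - A @ coefs) ** 2)

# Any other coefficient vector gives a larger sum of squares.
b_perturbed = b + np.array([0.0, 0.1, 0.0])

# The prediction when every independent variable is 0 is the intercept.
y_at_zero = np.array([1.0, 0.0, 0.0]) @ b

print("b =", b)
print("SSE at b:", sse(b), " SSE at perturbed b:", sse(b_perturbed))
```

For badly conditioned design matrices, `np.linalg.lstsq` is usually a safer choice than forming `A.T @ A` explicitly. Both return the same estimates on well-behaved data like this.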

In multiple regression analysis, the relationship between one dependent variable and several independent variables (called predictors) is analyzed.